VIVERSE’s Approach to AI: Transparent, Ethical, and Privacy-First

AI is everywhere right now. So is the anxiety around it. If you’ve wondered whether a platform you’re using is training AI on your data, you’re hardly alone. Let’s answer it plainly: VIVERSE does NOT use your user data or content to train AI models.

That’s the core promise behind this guide. We’re not going to hide behind vague “privacy-first” language. We’re going to tell you what our AI-labeled tools do, what they do not do, and where the boundaries are. If you want the official wording, it’s in our updated Terms of Use, and we’ll point you there again at the end.

TL;DR

You keep your rights to what you create. Our license only covers what’s needed to host and display content. AI training is not the default. If anything ever involves training, it will require your explicit opt-in.

Now let’s clear up the “AI” label, because that word gets messy, and it gets there fast.

Quick clarity: generative AI vs. machine learning reconstruction

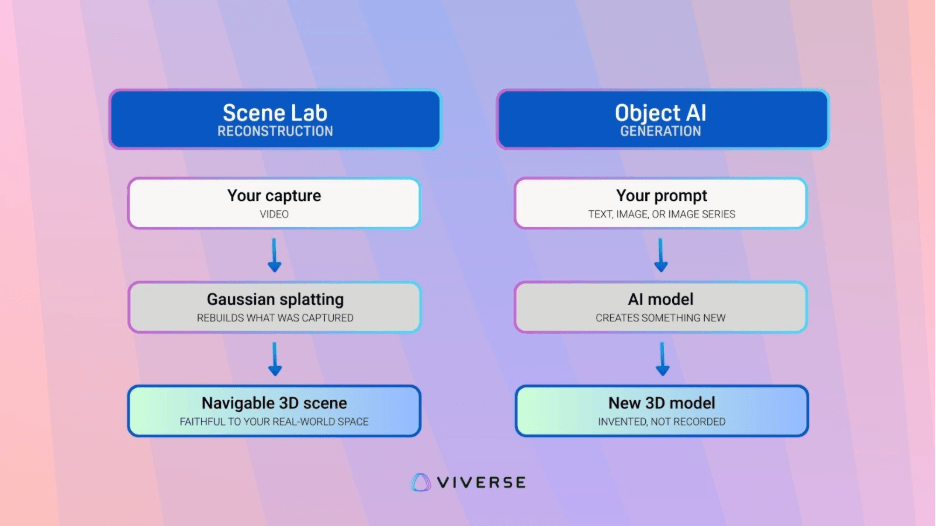

People say “AI,” but they usually mean one of two things. The first is generative AI, which creates something new from a prompt, like an image or a 3D object. The second is machine learning reconstruction, which rebuilds what you captured in a usable format, like a Gaussian splat 3D reconstruction from video. Both get labeled “AI” in casual conversation, but they are not the same thing, and they raise different questions. That is why we’ll call out what each tool is doing and what data it actually needs.

This difference matters because it changes the trust question. With generative tools, you wonder where the training data came from. With reconstruction tools, you wonder what happens to your footage after processing. So, when we explain our tools, we’ll be specific about what kind of system it is, and we’ll be specific about the boundaries we follow.

Our stance: creator ownership and opt-in AI training

We published a Terms of Use update that spells out the creator-first basics. You keep your rights to your work. Our platform license is limited to hosting and displaying your content. The update is also explicit about AI protections: your data is yours, and VIVERSE will NOT use your content to train AI models.

That last part is the big one. It means “training” is not something buried in fine print. If training were ever on the table, it would be a choice you make on purpose, through explicit opt-in. This is how we want trust to work, especially when creators are experimenting and iterating fast.

Under the hood: what each AI service does, and what it does not do

We have a couple of services that people will call “AI.” Some are reconstruction workflows. Some include generative output. Instead of treating them all the same, here’s a breakdown of what each one is and what it does.

VIVERSE Scene Lab: 3D reconstruction from your capture

Scene Lab is built for turning real-world capture into usable 3D content, without asking you to rebuild a space by hand. You capture video, Scene Lab does the heavy lifting, and you end up with a 3D scene you can use and reuse as you see fit; it’s yours, after all. It’s powered by machine learning, but it’s not doing the kind of “creative invention” people usually associate with generative AI.

Under the hood, Scene Lab uses Gaussian splatting, a machine learning technique for reconstructing a scene from images or video. The result is a navigable 3D environment built from what your camera captured. Like other modern reconstruction approaches, it gets grouped under “AI” because it relies on machine learning, but the key point is that this is reconstruction, not generation. It’s rebuilding what you recorded, not inventing details you never recorded.

Because it’s reconstruction, the privacy questions are different, and we want to be clear about the boundaries. Scene Lab doesn’t need to learn from your personal platform behavior to do its job, nor does it “improve itself” by quietly absorbing user uploads. In the same spirit as our overall AI stance, the output you create through these tools isn’t treated as training fuel. Should anything ever involve training, it would require explicit opt-in; that decision will never be made for you.

VIVERSE Object AI: 3D generation with clear training boundaries

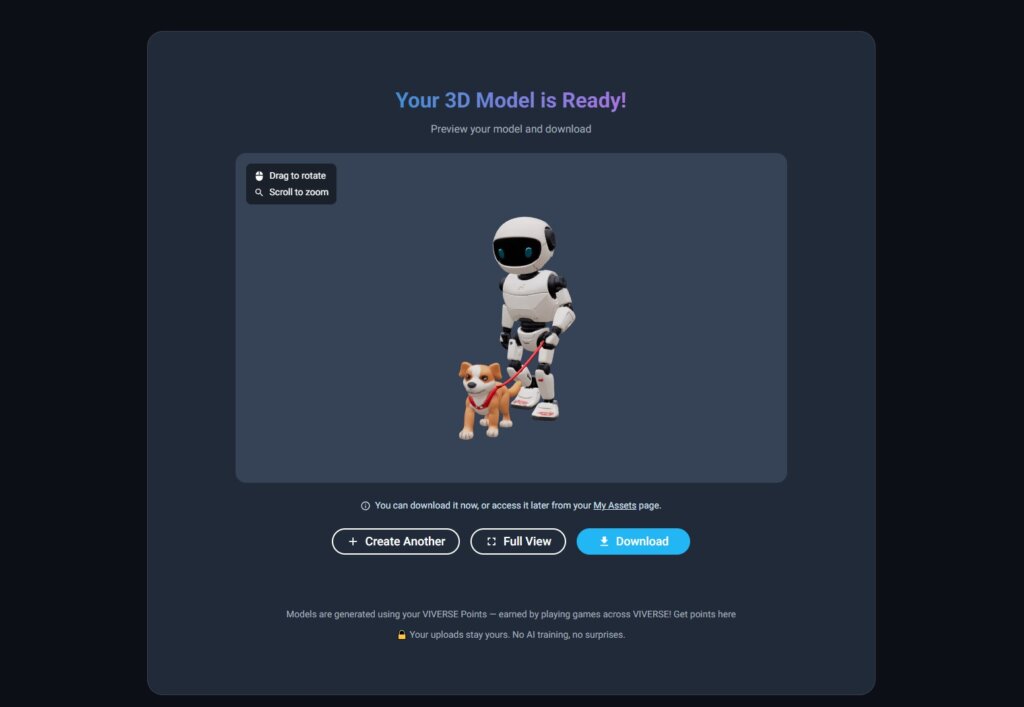

Object AI is the generative side of the toolkit. It takes input like text, an image, or a series of images, and produces a 3D model you can use in your workflow. This is the part where people most often ask, “Wait, are my uploads training the model?” Totally fair question.

On the Object AI product page, we answer that directly. Neither VIVERSE nor our AI provider, Tripo3D, will use your uploads or generated content for training purposes. The same page also states that you own the 3D models you create. That combination matters. It means you can prototype quickly without donating your work to a training pipeline.

This also lines up with the broader Terms stance. AI training is not something that silently happens because you used a tool. If anything involves training, it requires explicit opt-in, and we won’t change our minds about that.

Creative work is human work, and AI tools should simply be tools

We like the phrase “creative work is human work” because it’s a simple gut-check. AI should reduce friction in the parts you don’t want to do, if you choose to use it at all. It shouldn’t quietly absorb your style, your assets, or your outputs into a model you never agreed to train.

That’s what we’re building toward with our approach. Scene Lab helps you capture reality into 3D faster. Object AI helps you prototype 3D assets faster. You still make the creative calls. You still own the work you produce.

Creator-first, in practice

If this guide did its job, you should have a clear understanding of our approach to AI and your content. You know which tools are reconstruction vs. generation, what’s powering them, and what boundaries apply. That’s what “transparent” should mean right now, especially when creators are being asked to trust platforms with their work.

Still have questions?

Want the official policy language and the clearest tool-specific details? Start with our Terms of Use.

If you spot a question we didn’t answer, tell us, and we’ll answer it next!
